OVIE — One View Is Enough!

Monocular Training for In-the-Wild Novel View Generation

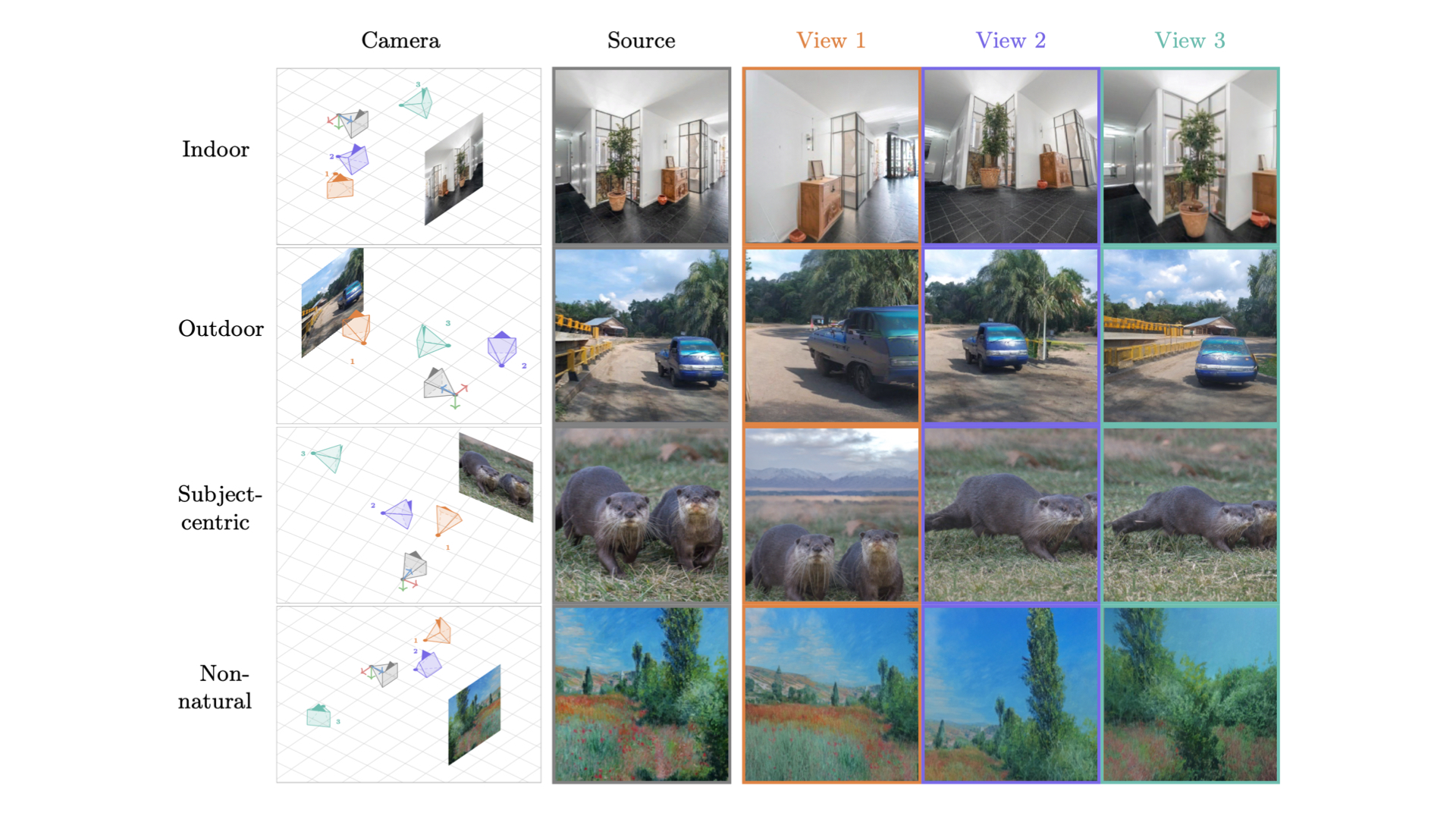

OVIE is a novel view synthesis model that generates a new viewpoint of a scene from a single image and a target camera pose. Unlike most prior work, it is trained entirely on unpaired in-the-wild images — no multi-view supervision required.

Model architecture

OVIE is a convolutional encoder–decoder with a Vision Transformer (ViT) bottleneck conditioned on camera parameters via adaptive layer normalisation (AdaLN):

- Encoder: cascaded downsampling ConvBlocks (3 scales)

- Bottleneck: 12-layer ViT (hidden size 768, 12 heads) with AdaLN camera conditioning

- Decoder: cascaded upsampling ConvBlocks (3 scales)

- Camera conditioning: a 7-dimensional pose encoding (rotation + translation) projected into the ViT hidden space

- Parameters: ~143M

Usage

import torch

from models.models import OVIEModel

from utils.pose_enc import extri_intri_to_pose_encoding

from torchvision.transforms import ToTensor

from PIL import Image

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Load model

model = OVIEModel.from_pretrained("kyutai/ovie", revision="v1.0").to(device)

model.eval()

image_size = model.image_size # 256

# Prepare input image

img_pil = Image.open("image.jpg").convert("RGB").resize((image_size, image_size))

img_tensor = ToTensor()(img_pil).unsqueeze(0).to(device)

# Define target camera pose (3x4 extrinsics)

extrinsics = torch.tensor([[[1.0, 0.0, 0.0, -1.25],

[0.0, 1.0, 0.0, 0.5],

[0.0, 0.0, 1.0, -2.0]]], device=device)

dummy_intrinsics = torch.zeros(1, 1, 3, 3, device=device)

camera = extri_intri_to_pose_encoding(

extrinsics=extrinsics.unsqueeze(0),

intrinsics=dummy_intrinsics,

image_size_hw=(image_size, image_size),

)

cam_token = camera[..., :7].squeeze(0)

# Generate novel view

with torch.no_grad():

pred = model(x=img_tensor, cam_params=cam_token)

# pred: (1, 3, 256, 256) tensor in [0, 1]

See the repository for full installation instructions and example notebooks:

inference_huggingface.ipynb— loads directly from this Hub pageinference_local.ipynb— loads from a local checkpoint

Training

OVIE is trained on a diverse mix of in-the-wild internet images (ImageNet, Places365, OSV5M, OpenImages) with no multi-view pairs. Training uses a combination of L2 reconstruction loss, LPIPS perceptual loss, and an adversarial loss with a DINO-based discriminator. Camera poses are sampled synthetically from a distribution of plausible viewpoint changes.

Evaluation

The model is evaluated on DL3DV and Real Estate 10K (RE10K) using PSNR, SSIM, and LPIPS. See the paper for full quantitative results.

Citation

@misc{ovie2026,

title={One View Is Enough! Monocular Training for In-the-Wild Novel View Generation},

author={Adrien Ramanana Rahary and Nicolas Dufour and Patrick Perez and David Picard},

year={2026},

eprint={2603.23488},

archivePrefix={arXiv},

primaryClass={cs.CV},

url={https://arxiv.org/abs/2603.23488},

}

- Downloads last month

- 422